AI Ethics: A Crash Course

A quick, practical overview to help you understand—and avoid—the ethical pitfalls of AI.

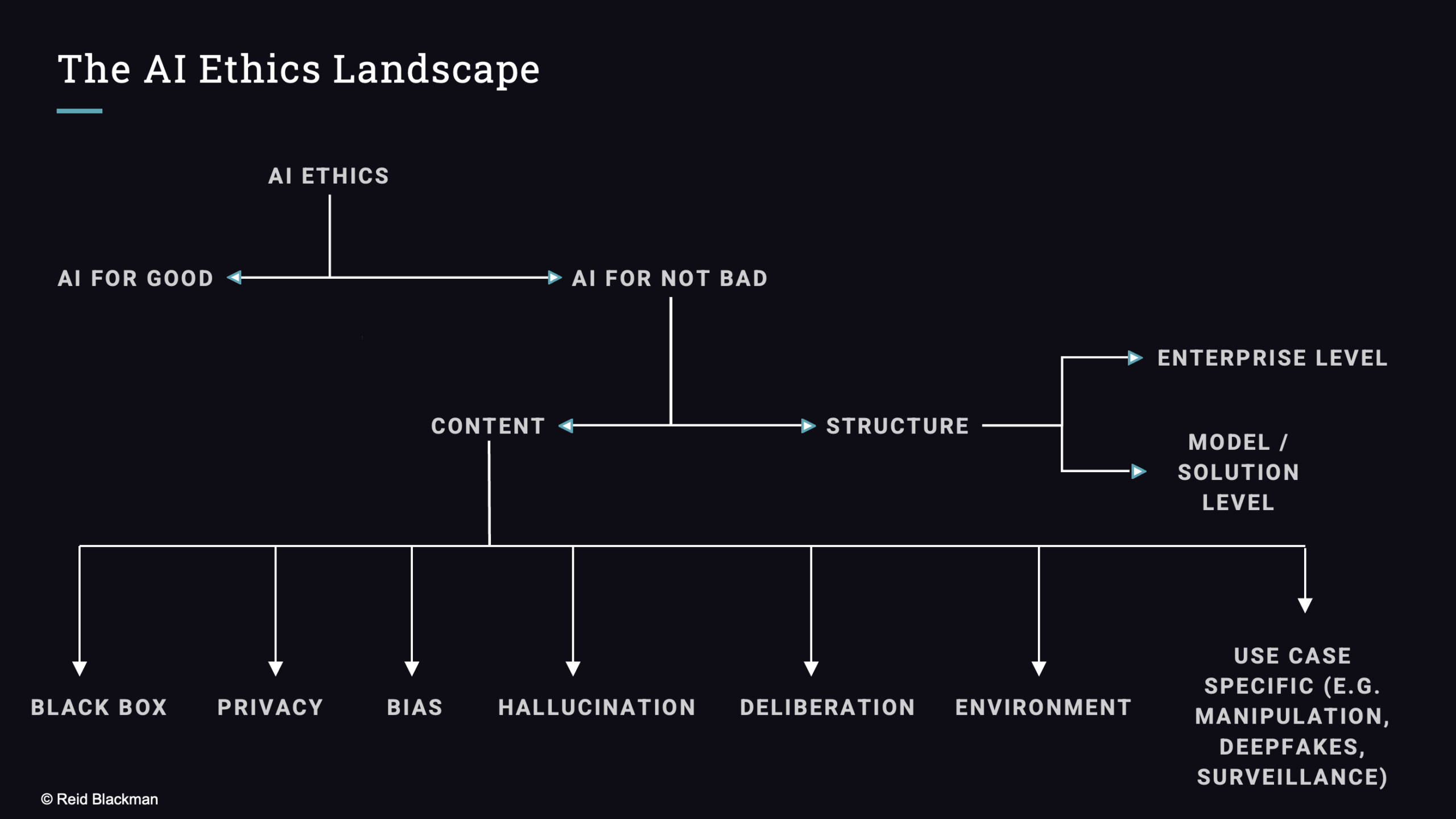

Crash Course on AI Ethics

If you don’t know much about AI ethics and want a high-level summary of the big issues, this is the page for you. If you like it and want to go deeper, the book is the place for that (unless you want to bring it to your team, in which case a workshop might make sense).